
ABOUT

I take two main approaches on this site: step-by-step information and summaries.

On another site that I publish (on Blogger), the posts are organized as “Problem vs. Solution”.

Here, instead, you get the information to avoid the problem in the first place!

Resilience and performance are a vast subject, but here you get at least a basic checklist to double-check your project against.

This is very helpful to avoid problems.

This post is meant to keep evolving, because this is a very dynamic area.

If you want to contribute references or additional information, you’re welcome to: let me know in the comments or by email.

Also, if you see another way of doing things, feel free to comment. It is always useful. Thanks!

As I’ve said, this is a continuous piece of work.

#KEY POINTS FOR RESILIENCE AND PERFORMANCE – THE CHECKLIST

Check whether your web app makes use of the following points.

#HTTP/2

#Advantages

Backward Compatibility

HTTP/2 retains the same semantics as HTTP/1.1.

This includes the HTTP methods (GET, POST, etc.), status codes, URLs, and header fields, so applications keep working as before; only the way messages are encoded on the wire changes.
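To illustrate "same semantics, different encoding", here is a minimal sketch that maps an HTTP/1.1 request line and headers onto an HTTP/2 header list. It assumes the rules in RFC 7540 (section 8.1.2): the request line and `Host` become the `:method`, `:scheme`, `:authority` and `:path` pseudo-headers, field names are lowercased, and connection-specific headers are dropped. The function name is hypothetical, and special cases such as `TE: trailers` are left out.

```python
from typing import Dict, List, Tuple

# Connection-specific headers that must not appear in HTTP/2 (RFC 7540, section 8.1.2.2).
CONNECTION_SPECIFIC = {"connection", "keep-alive", "proxy-connection", "transfer-encoding", "upgrade"}

def h1_to_h2_headers(request_line: str, headers: Dict[str, str],
                     scheme: str = "https") -> List[Tuple[str, str]]:
    """Map an HTTP/1.1 request onto an HTTP/2 header list (simplified sketch)."""
    method, target, _version = request_line.split(" ", 2)
    # HTTP/2 requires lowercase field names.
    lowered = {name.lower(): value for name, value in headers.items()}
    # Pseudo-headers must come before regular headers.
    h2 = [
        (":method", method),
        (":scheme", scheme),
        (":authority", lowered.pop("host", "")),  # Host becomes :authority
        (":path", target),
    ]
    for name, value in lowered.items():
        if name not in CONNECTION_SPECIFIC:
            h2.append((name, value))
    return h2

print(h1_to_h2_headers("GET /index.html HTTP/1.1",
                       {"Host": "example.com", "Connection": "keep-alive", "Accept": "text/html"}))
```

The method, path and headers survive unchanged; only the framing around them differs.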

Single, Persistent Connection

Only one connection per origin is needed, instead of the several parallel connections browsers open with HTTP/1.1.

That connection stays open and is reused for subsequent requests while the page is in use.

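Multiplexing, Request Parallelism & Request Prioritization

Each request/response pair travels on its own stream, and frames from different streams are interleaved on the single connection. A slow response therefore no longer blocks the others at the HTTP level, and the client can signal which streams matter most.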
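What makes multiplexing possible is that every HTTP/2 frame carries the ID of the stream it belongs to. A minimal sketch of the 9-octet frame header from RFC 7540 (section 4.1): 24-bit payload length, 8-bit type, 8-bit flags, then a reserved bit and a 31-bit stream identifier. Only the framing is shown; the function names are my own, not from any HTTP/2 library.

```python
import struct
from typing import Tuple

FRAME_DATA = 0x0        # frame type codes (RFC 7540, section 6)
FRAME_HEADERS = 0x1
FLAG_END_STREAM = 0x1   # on DATA/HEADERS: last frame of this stream
FLAG_END_HEADERS = 0x4  # on HEADERS: header block is complete

def encode_frame_header(length: int, frame_type: int, flags: int, stream_id: int) -> bytes:
    """Build the 9-octet HTTP/2 frame header."""
    if not 0 <= length < 1 << 24:
        raise ValueError("length must fit in 24 bits")
    if not 0 <= stream_id < 1 << 31:
        raise ValueError("stream id must fit in 31 bits")
    # 3-byte big-endian length, then type, flags and a 32-bit word whose
    # reserved top bit stays 0 because stream_id < 2**31.
    return length.to_bytes(3, "big") + struct.pack(">BBI", frame_type, flags, stream_id)

def decode_frame_header(header: bytes) -> Tuple[int, int, int, int]:
    """Return (length, type, flags, stream_id) from a 9-octet header."""
    length = int.from_bytes(header[0:3], "big")
    frame_type, flags, raw_id = struct.unpack(">BBI", header[3:9])
    return length, frame_type, flags, raw_id & 0x7FFFFFFF  # ignore reserved bit

# Frames of two responses (streams 1 and 3) interleaved on ONE connection.
connection = [
    encode_frame_header(1024, FRAME_DATA, 0, 1),
    encode_frame_header(1024, FRAME_DATA, 0, 3),
    encode_frame_header(512, FRAME_DATA, FLAG_END_STREAM, 3),
    encode_frame_header(512, FRAME_DATA, FLAG_END_STREAM, 1),
]
print([decode_frame_header(h)[3] for h in connection])  # → [1, 3, 3, 1]
```

The receiver reassembles each response by stream ID, so stream 3 can finish before stream 1 without opening a second connection.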

Header Compression and Binary Encoding

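Headers are compressed with HPACK (RFC 7541), a compression format designed to resist attacks such as CRIME, which reduces network traffic, especially for the repetitive headers (cookies, user agent) sent with every request.

Header information, like all HTTP/2 frames, is sent in a compact binary format instead of plain text.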
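HPACK combines a static table of common headers, a per-connection dynamic table, and Huffman coding. As a small taste of the binary encoding, here is a sketch of its prefixed-integer representation (RFC 7541, section 5.1), the building block used for table indexes and string lengths. The function names are my own; a full HPACK codec involves much more.

```python
from typing import Tuple

def encode_integer(value: int, prefix_bits: int, first_byte_flags: int = 0) -> bytes:
    """HPACK prefixed-integer encoding; flags occupy the bits above the prefix."""
    max_prefix = (1 << prefix_bits) - 1
    if value < max_prefix:
        return bytes([first_byte_flags | value])  # fits in the prefix
    out = [first_byte_flags | max_prefix]         # prefix all ones: more bytes follow
    value -= max_prefix
    while value >= 128:
        out.append((value % 128) + 128)           # 7 bits of payload, continuation bit set
        value //= 128
    out.append(value)                             # last byte, continuation bit clear
    return bytes(out)

def decode_integer(data: bytes, prefix_bits: int) -> Tuple[int, int]:
    """Return (value, number of bytes consumed)."""
    max_prefix = (1 << prefix_bits) - 1
    value = data[0] & max_prefix
    if value < max_prefix:
        return value, 1
    shift, i = 0, 1
    while True:
        b = data[i]
        value += (b & 0x7F) << shift
        shift += 7
        i += 1
        if (b & 0x80) == 0:
            return value, i

print(encode_integer(1337, 5).hex())  # → 1f9a0a (the RFC 7541 C.1.2 example)
print(decode_integer(bytes([0x1F, 0x9A, 0x0A]), 5))  # → (1337, 3)
```

For example, `encode_integer(2, 7, 0x80)` yields the single byte `0x82`, which HPACK reads as "indexed header field, static table entry 2" (`:method: GET`): a whole header in one byte instead of a text line.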

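TLS Encryption

The HTTP/2 specification does not require encryption, but browsers only implement HTTP/2 over TLS (the successor of SSL), so in practice HTTP/2 means HTTPS. Because everything travels over a single connection, the TLS handshake cost is paid once per origin rather than once per connection.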
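Over TLS, client and server agree on HTTP/2 during the handshake using ALPN, where the client offers `"h2"` and falls back to `"http/1.1"`. A minimal sketch with Python's standard `ssl` module, useful for checking whether a server of yours actually negotiates HTTP/2. The function names are hypothetical, and the host in the usage line is only a placeholder.

```python
import socket
import ssl
from typing import Optional

def make_h2_client_context() -> ssl.SSLContext:
    """TLS client context offering HTTP/2 via ALPN, with HTTP/1.1 as fallback."""
    ctx = ssl.create_default_context()            # certificate and hostname checks enabled
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # HTTP/2 over TLS requires TLS 1.2 or later
    ctx.set_alpn_protocols(["h2", "http/1.1"])    # in order of preference
    return ctx

def negotiated_protocol(host: str, port: int = 443, timeout: float = 5.0) -> Optional[str]:
    """Connect over TLS and return the ALPN protocol the server selected (or None)."""
    ctx = make_h2_client_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return tls.selected_alpn_protocol()

# Usage (needs network access): negotiated_protocol("your-site.example")
```

A result of `"h2"` means the server speaks HTTP/2; `"http/1.1"` or `None` means it does not (or not on that endpoint).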

Server push

HTTP/2 Server Push allows an HTTP/2-compliant server to send resources to a HTTP/2-compliant client before the client requests them. It is, for the most part, a performance technique that can be helpful in loading resources preemptively.

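@FROM: Wikipedia

Note that Chrome dropped support for Server Push in 2022, so check current browser support before relying on it.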

#Performance Comparison

Check this dynamic comparison test:

Performance testing HTTP/1.1 vs HTTP/2 vs HTTP/2 + Server Push for REST APIs

#More Detailed Information

CACHE

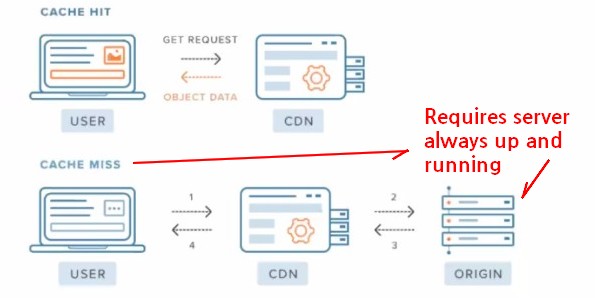

A cache stores copies of responses from previous traffic, so repeated requests can be served without redoing the work. It can be configured in many ways (what to store, for how long, and where).

There is a drawback to using a cache that must be considered.

Caches are not recommended for sites where the traffic is very dynamic, with a low rate of repetition.

Every new entry demands some processing (lookup, storage, eviction), and if the probability of it being used again is low, the cache may slow things down instead of improving performance.

It requires fine-tuning and careful consideration. It is not a silver bullet.
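To make the trade-off concrete, here is a minimal sketch of an in-memory LRU (least-recently-used) cache that counts hits and misses, plus a simple latency estimate. The class, the `loader` function and the timings are hypothetical, chosen only for illustration; real caches have their own policies and costs.

```python
from collections import OrderedDict
from typing import Callable, Hashable, Iterable

class LRUCache:
    """Minimal least-recently-used cache that counts hits and misses."""

    def __init__(self, capacity: int, loader: Callable[[Hashable], object]):
        self.capacity = capacity
        self.loader = loader          # hypothetical slow source (e.g. a database query)
        self.store: OrderedDict = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key: Hashable) -> object:
        if key in self.store:
            self.store.move_to_end(key)       # mark as most recently used
            self.hits += 1
            return self.store[key]
        self.misses += 1
        value = self.loader(key)              # miss: do the real work...
        self.store[key] = value               # ...and pay extra to store it
        if len(self.store) > self.capacity:
            self.store.popitem(last=False)    # evict the least recently used entry
        return value

    def hit_ratio(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0

def run(keys: Iterable[Hashable], capacity: int = 100) -> float:
    cache = LRUCache(capacity, loader=lambda k: f"page-{k}")
    for k in keys:
        cache.get(k)
    return cache.hit_ratio()

def effective_latency(hit_ratio: float, t_hit: float, t_origin: float, t_overhead: float) -> float:
    """Hits are served from cache; misses pay the origin cost plus the cache's own overhead."""
    return hit_ratio * t_hit + (1 - hit_ratio) * (t_origin + t_overhead)

repetitive = [i % 10 for i in range(1000)]  # 10 popular pages requested over and over
unique = list(range(1000))                  # every request is for new content
print(run(repetitive))  # → 0.99
print(run(unique))      # → 0.0

# Hypothetical timings in ms: 1 ms hit, 50 ms origin, 2 ms cache overhead per miss.
print(effective_latency(run(repetitive), 1, 50, 2))  # about 1.5 ms
print(effective_latency(run(unique), 1, 50, 2))      # 52.0 ms, worse than no cache (50 ms)
```

With a hit ratio of zero the estimate exceeds the plain origin time, which is exactly the slowdown described above.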

CDN – CONTENT DELIVERY NETWORK

Consider using a CDN if your site has become slow, but first check other likely causes, such as database latency, code efficiency (remember Big O notation), and architecture design.

Then, if it is still necessary, consider a CDN.

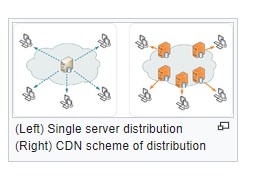

What Is a CDN?

A content delivery network, or content distribution network (CDN), is a geographically distributed network of proxy servers and their data centers. The goal is to provide high availability and performance by distributing the service spatially relative to end users. CDNs came into existence in the late 1990s as a means for alleviating the performance bottlenecks of the Internet,[1][2] even as the Internet was starting to become a mission-critical medium for people and enterprises.

CDNs are a layer in the internet ecosystem.

Content owners such as media companies and e-commerce vendors pay CDN operators to deliver their content to their end-users. In turn, a CDN pays Internet service providers (ISPs), carriers, and network operators for hosting its servers in their data centers.

CDN is an umbrella term spanning different types of content delivery services: video streaming, software downloads, web and mobile content acceleration, licensed/managed CDN, transparent caching, and services to measure CDN performance, load balancing, Multi CDN switching and analytics and cloud intelligence. CDN vendors may cross over into other industries like security, with DDoS protection and web application firewalls (WAF), and WAN optimization.

@FROM: Wikipedia
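The excerpt above describes distributing the service "spatially relative to end users". As a rough illustration only: real CDNs steer users with DNS or anycast routing and measured network latency, not straight-line distance. This sketch simply picks the geographically closest of a few hypothetical edge locations using the haversine great-circle formula; the edge names are made up for the example.

```python
import math
from typing import Dict, Tuple

LatLon = Tuple[float, float]  # (latitude, longitude) in degrees

# Hypothetical edge locations of an imaginary CDN.
EDGES: Dict[str, LatLon] = {
    "sao-paulo": (-23.55, -46.63),
    "miami": (25.76, -80.19),
    "frankfurt": (50.11, 8.68),
}

def haversine_km(a: LatLon, b: LatLon) -> float:
    """Great-circle distance between two points, in kilometres."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat, dlon = lat2 - lat1, lon2 - lon1
    h = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * 6371.0 * math.asin(math.sqrt(h))  # 6371 km: mean Earth radius

def nearest_edge(user: LatLon) -> str:
    """Name of the edge location closest to the user."""
    return min(EDGES, key=lambda name: haversine_km(user, EDGES[name]))

print(nearest_edge((-22.91, -43.17)))  # a user in Rio de Janeiro → sao-paulo
```

Serving that user from a few hundred kilometres away instead of another continent is where the CDN's latency gain comes from.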

APPLICATION ARCHITECTURE

Design is a huge area, but we can begin with SPA (Single-Page Application) vs. SSR (Server-Side Rendering).

SPA

The JavaScript bundles tend to become big and heavy, requiring more processing to render the page on the client.

On the other hand, after the initial load, the application runs smoother and lighter.

Caching is easier to tune.

Weaker SEO (search engines may not see content that is rendered only on the client).

Exposure to XSS (cross-site scripting) requires careful design.

SSR

Faster initial load (the page comes ready from the server).

Less work is done on the client, so devices with less processing power can display the page faster.

Requires accurate cache tuning.

Better security (more of the source code and logic stays on the server).

Better SEO (search engine crawlers receive fully rendered HTML).

If not well designed, it may require more round trips (requests to the server to re-render the page).
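Server-side rendering means the server returns finished HTML. A minimal sketch, assuming a hypothetical product page with user-submitted reviews: the whole page is built as a string on the server, and every piece of user text goes through Python's standard `html.escape`, so a malicious review is displayed as text instead of executed. Careful escaping matters for XSS in SSR just as much as in an SPA. Any web framework would send the returned string as the response body.

```python
from html import escape
from typing import List

def render_product_page(title: str, reviews: List[str]) -> str:
    """Render a complete HTML page on the server (hypothetical page and fields)."""
    # Escape user-controlled text before inserting it into markup.
    items = "\n".join(f"    <li>{escape(r)}</li>" for r in reviews)
    return (
        "<!DOCTYPE html>\n"
        f"<html><head><title>{escape(title)}</title></head>\n"
        "<body>\n"
        f"  <h1>{escape(title)}</h1>\n"
        "  <ul>\n"
        f"{items}\n"
        "  </ul>\n"
        "</body></html>"
    )

page = render_product_page("Running Shoes", ["Great fit!", "<script>alert(1)</script>"])
print(page)
```

The browser receives ready-to-display HTML, which is what gives SSR its fast first paint and crawler-friendly output.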

I hope this short piece of information, small to read but big to put into practice, helps you as it has helped me.

Good coding!

Brazilian systems analyst who graduated from UNESA (University Estácio de Sá – Rio de Janeiro). Geek at heart.